Best prompt management platforms: Features, comparisons, and recommendations

The shift from experimental prompting to production-grade AI systems has created a genuine infrastructure gap. Teams that started with a few prompts in a text file now find themselves managing hundreds of variations across models, environments, and use cases - with no clear way to track what changed, why, or whether it actually improved anything.

This is where prompt management platforms come in. At PromptLayer, we've been active participants in the rapid maturation of this space, and the evolution from basic prompt storage to full lifecycle management reflects how seriously organizations are taking prompt engineering as a discipline. What follows is a practical breakdown of what these platforms offer, how the major players compare, and what actually matters when choosing one.

What prompt management platforms actually do

At their core, prompt management platforms treat prompts as first-class artifacts rather than strings scattered across codebases. This means structured storage, version control, testing infrastructure, and governance - essentially the same rigor you'd apply to application code.

A few key concepts show up across nearly every platform:

- Prompt library/registry: Centralized storage with metadata, tags, and rollback capabilities

- Versioning: New revisions when templates, parameters, or tool configurations change

- Prompt-as-code: Programmatic authoring via APIs and SDKs, enabling CI/CD integration

- Observability: Per-call traces capturing inputs, outputs, latency, and token usage

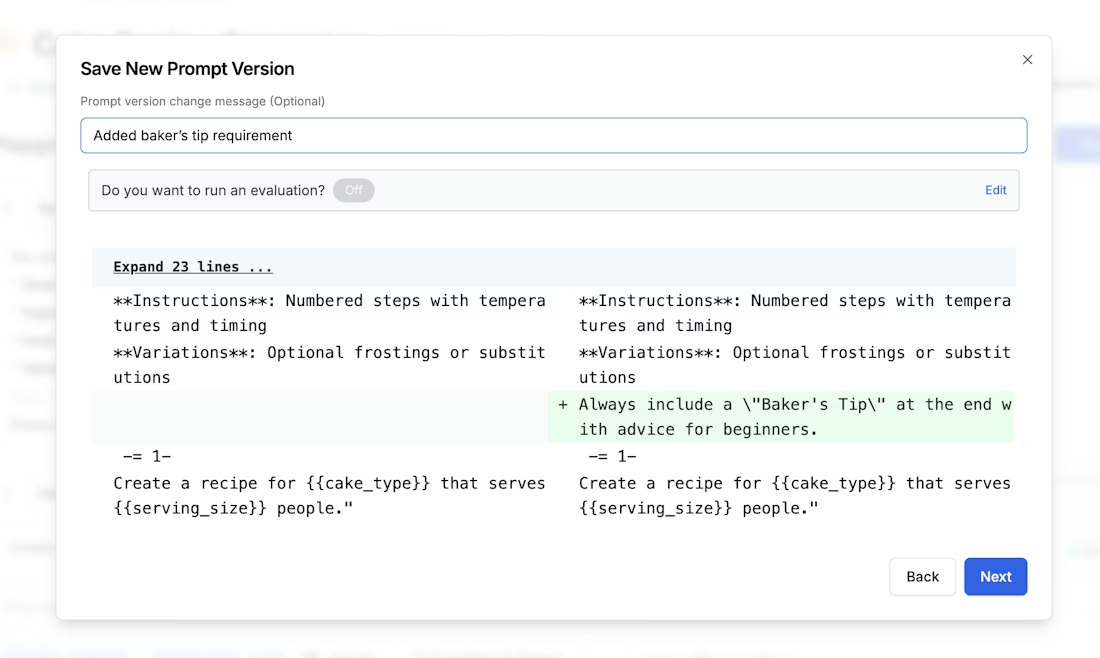

Side-by-side diff between two prompt versions, with additions in green and deletions in red

These capabilities integrate directly into AI engineering workflows - connecting retrieval systems, orchestrating model calls, running evaluations, and managing deployments. PromptLayer's REST API approach, for instance, lets teams plug prompt management into existing pipelines without wholesale architecture changes.
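To make the registry and versioning concepts concrete, here is a minimal in-memory sketch in Python. It is not PromptLayer's API or any vendor's: `PromptRegistry`, `publish`, `rollback`, and `get` are hypothetical names chosen for illustration, and a real platform would persist versions and enforce access controls.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Tuple


@dataclass
class PromptVersion:
    number: int
    template: str
    params: Dict[str, Any]
    tags: Tuple[str, ...] = ()


@dataclass
class PromptRegistry:
    """Hypothetical in-memory registry; not a real vendor API."""
    versions: Dict[str, List[PromptVersion]] = field(default_factory=dict)
    active: Dict[str, int] = field(default_factory=dict)

    def publish(self, name: str, template: str,
                params: Optional[Dict[str, Any]] = None,
                tags: Tuple[str, ...] = ()) -> PromptVersion:
        history = self.versions.setdefault(name, [])
        params = dict(params or {})
        latest = history[-1] if history else None
        # Only create a revision when the template or parameters changed.
        if latest is not None and latest.template == template and latest.params == params:
            return latest
        version = PromptVersion(len(history) + 1, template, params, tuple(tags))
        history.append(version)
        self.active[name] = version.number
        return version

    def rollback(self, name: str, number: int) -> PromptVersion:
        history = self.versions[name]
        if not 1 <= number <= len(history):
            raise ValueError(f"{name} has no version {number}")
        # Rollback moves the active pointer; history is never rewritten.
        self.active[name] = number
        return history[number - 1]

    def get(self, name: str) -> PromptVersion:
        return self.versions[name][self.active[name] - 1]


registry = PromptRegistry()
registry.publish("summarize", "Summarize: {text}", {"temperature": 0.2})
registry.publish("summarize", "Summarize in one sentence: {text}", {"temperature": 0.2})
registry.rollback("summarize", 1)
print(registry.get("summarize").template)
```

Keeping history append-only and moving a pointer on rollback is one simple way to make every change auditable; it is a design assumption of this sketch, not a description of how any listed platform stores prompts.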

Features that separate the serious platforms

When evaluating platforms, certain capabilities reliably distinguish mature offerings from basic prompt storage.

Storage and versioning form the foundation. Look for environment separation (staging versus production), diff views between versions, and the ability to roll back without downtime. PromptLayer provides named environments with access controls, alongside side-by-side variation comparisons that make iteration auditable rather than guesswork.
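As a rough illustration of diff views and environment separation, the sketch below uses Python's standard `difflib` to compare two prompt versions and a plain dictionary to track which version each environment serves. The `diff_prompts` and `promote` helpers and the environment names are assumptions made for this example, not any platform's actual interface.

```python
import difflib
from typing import Dict


def diff_prompts(old: str, new: str, old_label: str = "v1", new_label: str = "v2") -> str:
    """Return a unified diff between two prompt templates."""
    lines = difflib.unified_diff(
        old.splitlines(),
        new.splitlines(),
        fromfile=old_label,
        tofile=new_label,
        lineterm="",
    )
    return "\n".join(lines)


def promote(environments: Dict[str, int], source: str, target: str) -> Dict[str, int]:
    """Point the target environment at the version the source environment serves."""
    updated = dict(environments)  # copy so the previous mapping stays available for rollback
    updated[target] = updated[source]
    return updated


v1 = "You are a helpful assistant.\nAnswer the question."
v2 = "You are a concise assistant.\nAnswer the question."
print(diff_prompts(v1, v2))

# Hypothetical mapping of environment name to prompt version number.
environments = {"staging": 2, "production": 1}
after_promotion = promote(environments, "staging", "production")
```

Because promotion only changes which version number an environment points at, rolling back is the same operation in reverse, which is why it does not need to involve downtime.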

Collaboration and access controls matter more than teams initially expect. RBAC, SSO integration, and audit logging become essential once multiple people touch prompts - especially in regulated industries. PromptLayer's shared workspaces and role-based controls are built for the point where prompt ownership extends beyond a single engineer.

A/B testing and evaluation are where prompt management earns its keep. Shipping a "better" prompt is easy; knowing whether it's actually better is the hard part. PromptLayer's A/B releases let teams split traffic between versions and measure real outcomes against defined metrics.
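One common way to split traffic between prompt versions is deterministic hashing, so the same user always sees the same version. The sketch below shows that general technique; it is not a description of how PromptLayer's A/B releases are implemented, and `assign_variant`, the experiment name, and the weights are all illustrative assumptions.

```python
import hashlib
from typing import Dict


def assign_variant(user_id: str, experiment: str, weights: Dict[str, float]) -> str:
    """Deterministically map a user to a variant in proportion to the weights."""
    if not weights or sum(weights.values()) <= 0:
        raise ValueError("weights must contain at least one positive value")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Use the first 32 bits of the hash as a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    total = sum(weights.values())
    cumulative = 0.0
    chosen = ""
    for variant, weight in weights.items():
        chosen = variant
        cumulative += weight / total
        if bucket < cumulative:
            break
    # If rounding leaves cumulative just under 1.0, the last variant is returned.
    return chosen


weights = {"prompt_v1": 0.9, "prompt_v2": 0.1}
counts = {"prompt_v1": 0, "prompt_v2": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "summarize-test", weights)] += 1
print(counts)
```

Including the experiment name in the hash input means a user's bucket in one experiment does not determine their bucket in the next one.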

Security and compliance often drive platform selection in enterprise contexts. SOC 2 readiness, HIPAA options, encryption with customer-managed keys, and deployment flexibility (SaaS, in-VPC, self-hosted) all factor into procurement decisions. PromptLayer addresses this through enterprise compliance options alongside a hosted SaaS model with free tiers for getting started.


How the leading platforms compare

The landscape includes several distinct approaches worth understanding.

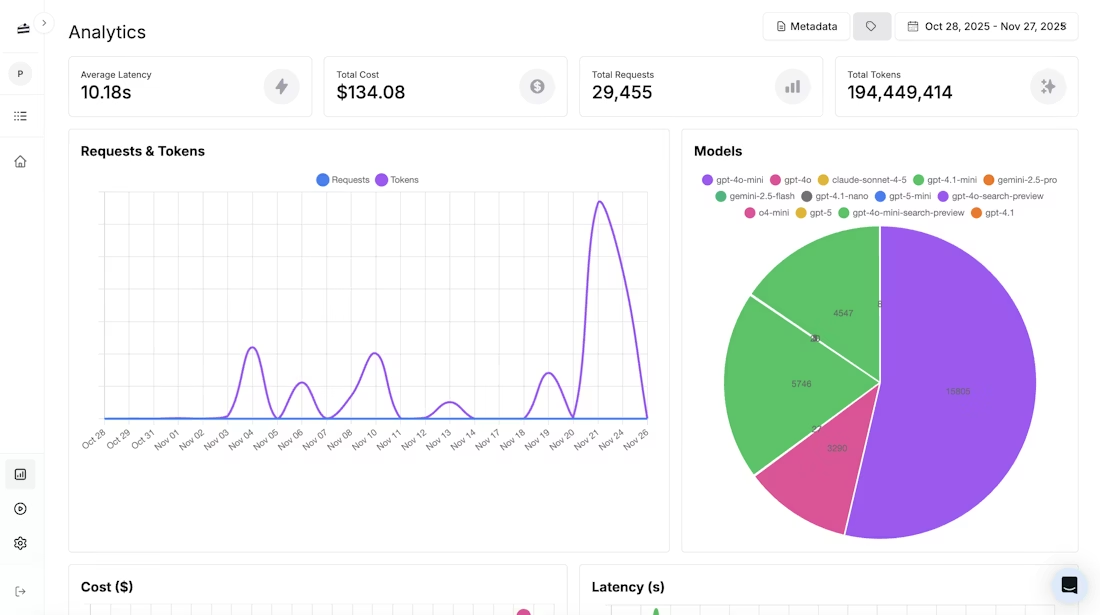

- PromptLayer provides logging, A/B testing, and analytics through a proxy architecture that works across providers - a deliberate design choice that captures every call without requiring teams to instrument them individually. The enterprise tiers include RBAC and compliance features, and the platform stays provider-agnostic across OpenAI, Anthropic, open-source models, and fine-tunes.

Analytics across prompts - cost, latency, and usage patterns, captured automatically

- LangSmith excels for teams already invested in LangChain. Native versioning, programmatic APIs for push/pull operations, and integrated tracing create a smooth experience - though the ecosystem bias can limit teams using other orchestration approaches. Trace-based pricing requires attention at scale.

- Portkey stands out for multi-provider support through a universal API. Gateway features like fallbacks, load balancing, and semantic caching address production reliability concerns. Enterprise compliance options exist, though pricing is custom.

- Humanloop offers clean prompt modeling with versioning triggered by template or parameter changes. Enterprise plans include SLAs and compliance options, though specific pricing requires vendor engagement.

- Open-source options (OpenLLM, Langfuse, Promptify) enable self-hosting and customization under permissive licenses. Teams gain control but assume operational responsibility - and enterprise features like RBAC require additional implementation work.
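The per-call capture and gateway-style fallbacks mentioned in the list above can be sketched in a few lines. This is a generic illustration under stated assumptions: `traced_call` is a hypothetical helper, and the provider functions are stand-ins that take a prompt string and return text, not real SDK calls from any vendor named here.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

Provider = Tuple[str, Callable[[str], str]]


def traced_call(providers: List[Provider], prompt: str,
                trace_log: List[Dict[str, Any]]) -> str:
    """Try providers in order, appending one trace record per attempt."""
    for name, call in providers:
        started = time.perf_counter()
        try:
            output = call(prompt)
            error = None
        except Exception as exc:  # any provider failure triggers the fallback
            output = None
            error = repr(exc)
        trace_log.append({
            "provider": name,
            "input": prompt,
            "output": output,
            "error": error,
            "latency_s": time.perf_counter() - started,
        })
        if error is None:
            return output
    raise RuntimeError("all providers failed")


# Stand-in providers for illustration only.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def stable_backup(prompt: str) -> str:
    return f"echo: {prompt}"


log: List[Dict[str, Any]] = []
answer = traced_call([("primary", flaky_primary), ("backup", stable_backup)], "hello", log)
print(answer, len(log))
```

Recording failed attempts as well as successful ones is what makes the trace useful for debugging reliability issues; a production setup would also capture token usage from the provider's response.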

Matching platforms to actual needs

Different situations call for different tools.

For individual developers experimenting, lightweight hosted tools with free tiers provide simple interfaces without infrastructure overhead. PromptLayer's free tier fits here as well, with no commitment required to start.

Prompt engineers and ML teams benefit from platforms with robust versioning, traces, and evaluation pipelines. PromptLayer is built for exactly this workflow; LangSmith and Langfuse offer similar experiment-oriented features, and the right choice among them depends on your existing stack.

Enterprises and regulated industries should prioritize RBAC, SSO, encryption options, and compliance documentation. PromptLayer's enterprise tier, Portkey, and Amazon Bedrock with AWS KMS all address these requirements - though in-VPC or self-hosted deployment options may be necessary depending on data residency constraints.

When running a proof of concept, define success metrics upfront (quality scores, latency targets, cost per task), run representative workloads, use A/B testing to compare versions, and verify governance controls before production promotion.
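Defining success metrics upfront can be as simple as writing the thresholds down in code before the pilot starts. The following is a minimal sketch with made-up numbers: `RunResult`, `meets_targets`, and every threshold and measurement shown are hypothetical placeholders, not benchmark data.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Tuple


@dataclass
class RunResult:
    quality: float    # evaluator score between 0 and 1
    latency_s: float  # end-to-end latency in seconds
    cost_usd: float   # cost of the task in US dollars


def meets_targets(results: List[RunResult], min_quality: float,
                  max_latency_s: float, max_cost_usd: float) -> Tuple[bool, Dict[str, float]]:
    """Average each metric over the workload and check it against its target."""
    if not results:
        raise ValueError("no results to evaluate")
    summary = {
        "quality": mean(r.quality for r in results),
        "latency_s": mean(r.latency_s for r in results),
        "cost_usd": mean(r.cost_usd for r in results),
    }
    passed = (
        summary["quality"] >= min_quality
        and summary["latency_s"] <= max_latency_s
        and summary["cost_usd"] <= max_cost_usd
    )
    return passed, summary


# Placeholder measurements for a candidate prompt version.
candidate = [RunResult(0.8, 1.2, 0.004), RunResult(0.9, 1.6, 0.006)]
passed, summary = meets_targets(candidate, min_quality=0.8, max_latency_s=2.0, max_cost_usd=0.01)
print(passed, summary)
```

Averages are used here for brevity; teams that care about tail behavior may prefer percentile latency targets instead.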

Pick a platform like you are shipping

The gap between a folder full of prompts and a system you can actually improve on purpose is wider than it looks, and the platforms worth using have mostly figured out the same loop: treat prompts like code, measure every call, test changes, and promote with guardrails.

So skip the checkbox shopping. Run a tight pilot with real workloads and clear metrics, stress the security and access model early, and pay attention to how the tool fits your architecture and team habits. If it makes iteration faster, safer, and easier to audit, you have your answer - ship with it and keep tuning.