What Is Agent Evaluation? A Practical Guide for AI Teams

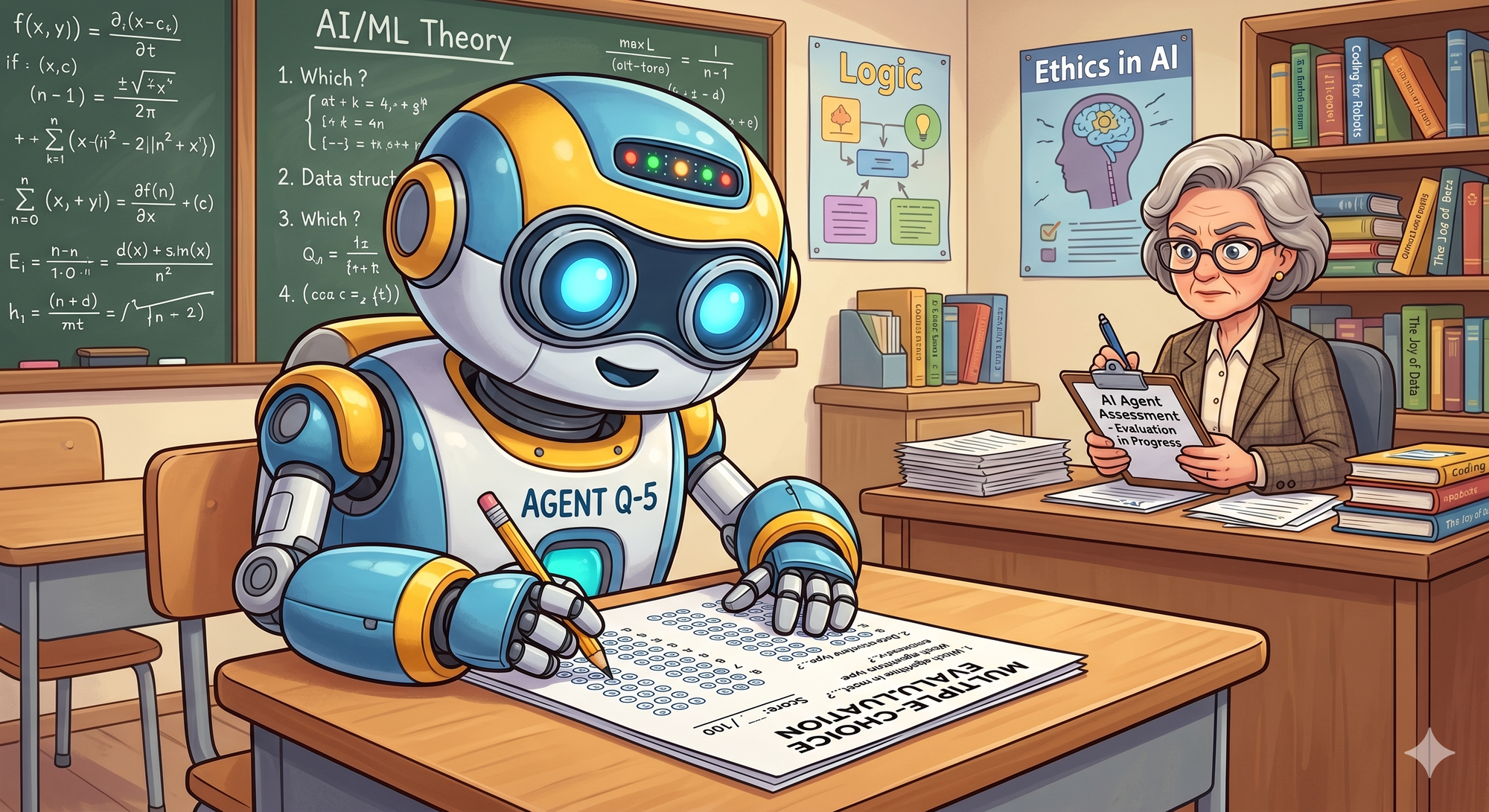

Agent evaluation is the process of testing whether an AI agent reliably completes the task it was built to do across real inputs, edge cases, and new versions.

For AI teams, agent evaluation is what turns “the demo worked” into “we can ship this with confidence.” It helps teams catch failures, compare versions, prevent regressions, and understand whether changes to prompts, models, tools, or workflows actually improve performance.

PromptLayer helps teams version, test, and monitor agents with evals, tracing, datasets, and regression testing.

Agent evaluation vs. LLM evaluation

LLM evaluation usually focuses on a single model response. You give the model an input, score the output, and decide whether it passed.

Agent evaluation is more complex because agents are multi-step systems. An agent may choose tools, call APIs, retrieve context, run a workflow, make intermediate decisions, and then produce a final answer.

That means you need to evaluate more than the final response. You also need to understand how the agent got there.

LLM evaluation asks: Did the model produce a good answer?

Agent evaluation asks: Did the agent complete the task correctly, reliably, and through the right steps?

Types of agent evaluation

Black-box evaluation

Black-box evaluation looks only at the final result.

You ask: did the agent complete the task?

Examples include checking whether the agent returned the right answer, produced valid JSON, completed a workflow, or followed the requested format.
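As a rough illustration, a black-box check can be a small function that grades only the final output. The sketch below assumes the agent returns a JSON object with an `answer` field. The function name `black_box_check`, the field names, and the case-insensitive comparison are all hypothetical choices made for this example, not part of any particular library.

```python
import json


def black_box_check(raw_output: str, required_keys: set[str], expected_answer: str) -> dict:
    """Grade only the final output: valid JSON, required keys, expected answer."""
    result = {"valid_json": False, "has_required_keys": False, "correct_answer": False}

    # Check 1: the output must parse as JSON.
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return result
    result["valid_json"] = True

    # Check 2: the output must be an object that follows the requested format.
    if not isinstance(parsed, dict):
        return result
    result["has_required_keys"] = required_keys.issubset(parsed)

    # Check 3: the answer must match (assumed here to be a case-insensitive match).
    answer = str(parsed.get("answer", "")).strip().lower()
    result["correct_answer"] = answer == expected_answer.strip().lower()
    return result


report = black_box_check('{"answer": "Paris", "sources": []}', {"answer", "sources"}, "paris")
print(report)
```

Returning one flag per check, rather than a single pass/fail value, makes it easier to see which requirement an output missed.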

This is the easiest place to start, but a failed check only tells you that the agent failed, not which step went wrong.

Trajectory evaluation

Trajectory evaluation looks at the steps the agent took.

This matters most for tool-using agents, where a plausible final answer can hide a flawed process. An agent might produce a correct-looking answer while calling the wrong tool, skipping a required step, using bad retrieval results, or taking an unnecessarily expensive path.

Trajectory evaluation checks things like tool calls, tool arguments, step order, intermediate outputs, latency, and cost.
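One way to picture these checks is a function that walks a recorded list of steps. The sketch below is a minimal example under stated assumptions: the `Step` record, its field names, and the budget parameters are hypothetical and do not reflect any specific tracing format. It checks that required tools were called in the expected order and that total latency and cost stay within a budget.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One recorded agent step (hypothetical shape, for illustration only)."""
    tool: str
    args: dict = field(default_factory=dict)
    latency_s: float = 0.0
    cost_usd: float = 0.0


def evaluate_trajectory(steps, required_order, max_latency_s, max_cost_usd):
    """Check step order, total latency, and total cost for one agent run."""
    called = [s.tool for s in steps]

    # The required tools must appear in this order; other calls may sit between them.
    remaining = iter(called)
    order_ok = all(tool in remaining for tool in required_order)

    total_latency = sum(s.latency_s for s in steps)
    total_cost = sum(s.cost_usd for s in steps)
    return {
        "tools_called": called,
        "order_ok": order_ok,
        "latency_ok": total_latency <= max_latency_s,
        "cost_ok": total_cost <= max_cost_usd,
    }


run = [
    Step("search", {"query": "refund policy"}, latency_s=0.5, cost_usd=0.001),
    Step("fetch", {"doc_id": "42"}, latency_s=1.0, cost_usd=0.002),
    Step("summarize", latency_s=2.0, cost_usd=0.01),
]
print(evaluate_trajectory(run, ["search", "summarize"], max_latency_s=5.0, max_cost_usd=0.05))
```

Treating the required tools as an ordered subsequence, rather than an exact match, lets the agent take extra steps without failing the check. A stricter evaluation could compare the full sequence or the tool arguments as well.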

PromptLayer traces show span-level context for workflows, agents, tools, and multi-step logic, which makes this kind of evaluation easier to debug and repeat.

Component-level evaluation

Component-level evaluation tests individual parts of the agent workflow.

For example, you might evaluate whether the agent chose the right tool, generated a useful retrieval query, extracted the right fields, followed a schema, or avoided unsupported claims.

This is useful when the full task is hard to grade automatically, but specific decisions can be checked with code, validation rules, LLM-as-judge, or human review.
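For instance, a field-extraction step can often be graded with plain validation rules, even when the full task needs human review. The sketch below assumes a hypothetical extraction step that should return an `order_id` and an `email`. The field names and the expected formats are invented for illustration.

```python
import re

# Hypothetical formats for this example; real rules depend on your own data.
ORDER_ID_PATTERN = re.compile(r"ORD-\d{6}")
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def check_extracted_fields(extracted: dict) -> list[str]:
    """Return a list of problems found in one extraction result (empty means pass)."""
    errors = []

    # Rule 1: every required field must be present.
    for name in ("order_id", "email"):
        if name not in extracted:
            errors.append(f"missing field: {name}")

    # Rule 2: fields that are present must match the expected format.
    if "order_id" in extracted and not ORDER_ID_PATTERN.fullmatch(str(extracted["order_id"])):
        errors.append("order_id has unexpected format")
    if "email" in extracted and not EMAIL_PATTERN.fullmatch(str(extracted["email"])):
        errors.append("email has unexpected format")
    return errors


print(check_extracted_fields({"order_id": "ORD-123456", "email": "sam@example.com"}))
print(check_extracted_fields({"order_id": "123"}))
```

Because the check returns specific error messages, failures can be grouped by cause when reviewing a batch of runs.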

Agent evaluation metrics

Useful agent evaluation metrics include:

Task completion rate: How often the agent successfully completes the expected task.

Tool selection accuracy: How often the agent chooses the right tool or API.

Unsupported-claim rate: How often the agent makes claims that are not grounded in the provided context or retrieved information.

Latency and cost per step: How much time and money each step adds.

Regression pass rate: What share of evaluation cases still pass after a prompt, model, or agent update.

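Together, these metrics cover both quality (is the output correct and grounded?) and reliability (does the agent stay correct, fast, and affordable across runs and versions?).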
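Several of the metrics listed in this section reduce to simple ratios over per-case results. The sketch below shows one possible way to compute them. The record shape (`completed`, `expected_tool`, `chosen_tool`, `unsupported_claims`, `total_claims`) is an assumption made for this example, and the unsupported-claim rate is computed here as a share of all claims, which is only one reasonable definition.

```python
def summarize_metrics(results: list[dict]) -> dict:
    """Aggregate per-case results into a few headline rates."""
    if not results:
        raise ValueError("results must contain at least one case")

    # Task completion rate: completed cases over all cases.
    completed = sum(1 for r in results if r["completed"])

    # Tool selection accuracy: only cases that define an expected tool are counted.
    tool_cases = [r for r in results if r.get("expected_tool") is not None]
    tool_hits = sum(1 for r in tool_cases if r.get("chosen_tool") == r["expected_tool"])

    # Unsupported-claim rate: unsupported claims over all claims (assumed definition).
    total_claims = sum(r.get("total_claims", 0) for r in results)
    unsupported = sum(r.get("unsupported_claims", 0) for r in results)

    return {
        "task_completion_rate": completed / len(results),
        "tool_selection_accuracy": tool_hits / len(tool_cases) if tool_cases else None,
        "unsupported_claim_rate": unsupported / total_claims if total_claims else None,
    }


cases = [
    {"completed": True, "expected_tool": "search", "chosen_tool": "search",
     "unsupported_claims": 1, "total_claims": 4},
    {"completed": False, "expected_tool": "calculator", "chosen_tool": "search",
     "unsupported_claims": 0, "total_claims": 2},
]
print(summarize_metrics(cases))
```

Rates with an empty denominator are reported as `None` rather than zero, so a metric that was never measured is not mistaken for a poor score.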

How to evaluate an AI agent

Start by defining what “correct” means for your agent. Decide what the agent must do, what it must avoid, and which parts of the workflow can be checked automatically.

Next, build an evaluation dataset. Start with real or representative examples that cover common requests, edge cases, and known failures.

Then run black-box evaluations on the final output. Check task completion, formatting, safety, and correctness.

Once you have traces, add trajectory evaluation. Look at tool calls, intermediate steps, retrieval behavior, latency, and cost.

Finally, make evaluation part of your release process. Every time you update a prompt, model, or agent workflow, run your regression set and compare results against the previous version.
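The comparison step can be sketched as a diff between two sets of pass/fail results keyed by case ID. The function name `compare_runs` and the result format below are hypothetical. The sketch assumes each run has already been graded and only shows how regressions might be surfaced.

```python
def compare_runs(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict:
    """Compare pass/fail results for the cases both versions were run on."""
    shared = sorted(baseline.keys() & candidate.keys())

    # Regressions passed before and fail now; fixes failed before and pass now.
    regressions = [case for case in shared if baseline[case] and not candidate[case]]
    fixes = [case for case in shared if not baseline[case] and candidate[case]]

    # Regression pass rate: share of previously passing cases that still pass.
    previously_passing = [case for case in shared if baseline[case]]
    if previously_passing:
        pass_rate = (len(previously_passing) - len(regressions)) / len(previously_passing)
    else:
        pass_rate = None

    return {"regressions": regressions, "fixes": fixes, "regression_pass_rate": pass_rate}


previous = {"case-1": True, "case-2": True, "case-3": False}
updated = {"case-1": True, "case-2": False, "case-3": True}
print(compare_runs(previous, updated))
```

A release gate could then block a change whenever `regressions` is non-empty, or whenever the pass rate drops below a threshold the team has agreed on.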

PromptLayer supports reusable datasets for evaluations, backtests, regression checks, and batch workflows.

How PromptLayer helps with agent evaluation

PromptLayer gives AI teams a practical way to version, test, and monitor agents before shipping them.

With PromptLayer, teams can trace agent runs, inspect spans, build evaluation datasets, run batch evaluations, backtest against production history, compare versions, and automatically trigger evaluations when new prompt versions are created.

PromptLayer also supports flexible evaluation pipelines, including code execution, human input, conversation simulation, equality checks, regex checks, and LLM assertions.

Instead of relying on manual review or one-off scripts, PromptLayer helps teams make agent evaluation a repeatable part of the development and release process.

FAQ

What is agent evaluation?

Agent evaluation is the process of testing whether an AI agent completes tasks correctly and reliably across different inputs, edge cases, and versions.

Why is agent evaluation important?

Agent evaluation helps teams catch failures, reduce regressions, improve reliability, and ship agent updates with more confidence.

How is agent evaluation different from LLM evaluation?

LLM evaluation usually scores a single response. Agent evaluation scores both the final output and the multi-step process the agent used to produce it.

What metrics should you use for agent evaluation?

Common metrics include task completion rate, tool selection accuracy, unsupported-claim rate, latency and cost per step, and regression pass rate.

How does PromptLayer help evaluate AI agents?

PromptLayer helps teams trace agent behavior, build evaluation datasets, run automated evaluations, compare versions, backtest against production history, and regression-test agents before they reach production.