n8n alternatives for AI teams: build LLM workflows with prompt chaining

AI automation has become table stakes for engineering teams shipping production applications. What started as simple webhook triggers and API connectors has evolved into something far more demanding - teams now need to orchestrate complex LLM calls, manage context windows, and chain prompts together in ways that traditional workflow tools never anticipated. The PromptLayer team has watched this shift closely, and the gap between what teams need and what legacy automation platforms offer keeps widening.

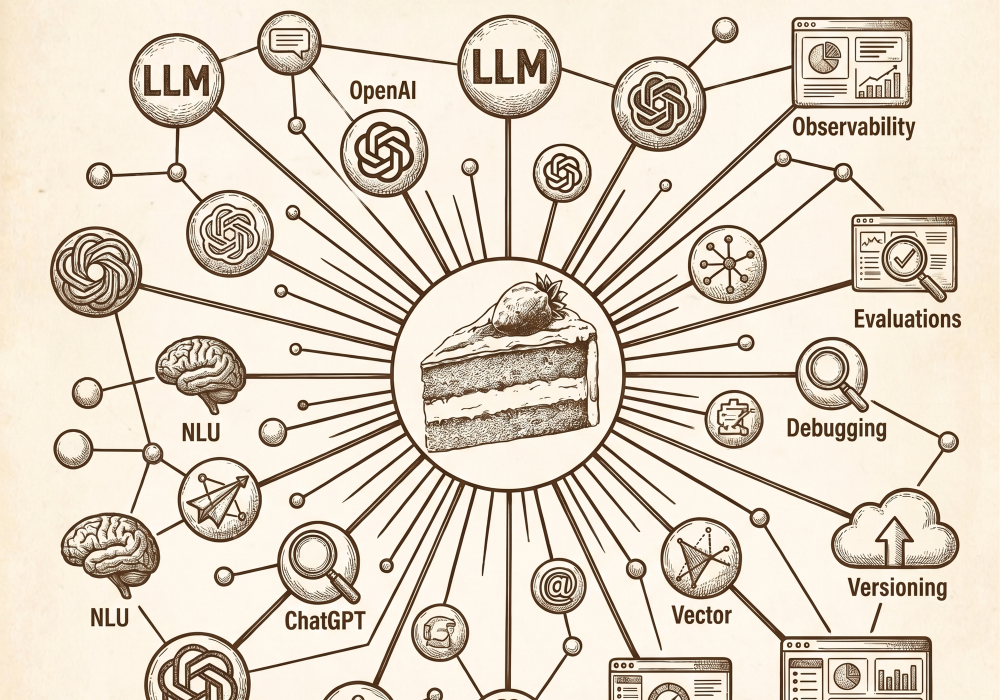

Tools like Zapier and n8n served us well for connecting SaaS applications, but they weren't built for the nuances of AI development. Prompt chaining - where the output of one LLM call feeds into the next - requires a different kind of infrastructure. You need version control for prompts, collaboration features for non-technical teammates, and evaluation frameworks to know if your chains actually work. That's the space we're focused on, and it's why more teams are looking beyond general-purpose automation.

AI pipeline automation is becoming its own category

The search volume tells the story. Terms like "ai agent builder" and "ai pipeline automation" are climbing fast, reflecting a real shift in how teams approach workflow construction. This isn't about connecting Slack to Google Sheets anymore - it's about autonomous systems that reason, retrieve, and respond.

The demand comes from a few converging pressures:

- Production complexity: Teams are moving past single-prompt prototypes into multi-step workflows that need reliability and observability.

- Collaboration gaps: Prompt engineering increasingly involves product managers, domain experts, and QA - not just developers.

- Evaluation needs: You can't just deploy a chain and hope it works. Teams need systematic ways to test outputs before they reach users.

Traditional automation platforms treat LLM calls as just another API endpoint. But anyone who's tried to debug a five-step prompt chain through Zapier knows that abstraction breaks down quickly. The tooling needs to understand what prompts are, how they relate to each other, and how to version them over time.

Evaluating low-code AI automation solutions

Low-code platforms promise speed, and for AI teams under pressure to ship, that promise is compelling. The appeal is obvious - visual builders let you prototype chains without writing boilerplate, and non-engineers can contribute to prompt design without blocking the development queue.

But not all low-code platforms handle LLM workflows equally. When evaluating options, consider these dimensions:

- Prompt-level versioning: Can you track changes to individual prompts independently, or does every edit require redeploying the entire workflow?

- Collaboration model: How do non-technical teammates participate? Can they edit prompts, run evaluations, or review outputs without developer intervention?

- Deployment flexibility: Does the platform support staging environments, rollbacks, and gradual rollouts?

- Evaluation infrastructure: How do you test whether a chain performs well across edge cases before pushing to production?

Many platforms check the "low-code" box but fall short on the specifics that matter for AI-first teams. The visual builder is just the surface - what happens underneath determines whether you can actually maintain and improve your workflows over time.
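To make the versioning and rollback questions above concrete, here is a minimal sketch of what "prompt-level versioning" can mean in practice. It is plain Python, not any platform's API: `PromptRegistry`, `publish`, `rollback`, and the prompt names are all hypothetical, chosen only for illustration. The point is that each prompt keeps its own history, so editing or reverting one prompt does not touch the others.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store where every prompt has its own version history."""

    _versions: dict[str, list[str]] = field(default_factory=dict)
    _live: dict[str, int] = field(default_factory=dict)

    def publish(self, name: str, template: str) -> int:
        """Append a new version of one prompt, make it live, return its 1-based number."""
        history = self._versions.setdefault(name, [])
        history.append(template)
        self._live[name] = len(history)
        return len(history)

    def rollback(self, name: str, version: int) -> None:
        """Point one prompt back at an earlier version without touching other prompts."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"{name!r} has no version {version}")
        self._live[name] = version

    def live_version(self, name: str) -> int:
        return self._live[name]

    def get(self, name: str) -> str:
        """Return the template that is currently live for this prompt."""
        return self._versions[name][self._live[name] - 1]


registry = PromptRegistry()
registry.publish("summarize", "Summarize: {text}")
registry.publish("classify", "Classify this summary: {summary}")
registry.publish("summarize", "Summarize in one sentence: {text}")

# Revert only the summarize prompt; classify stays on its own version.
registry.rollback("summarize", 1)
```

A platform that cannot express something like this, and instead forces a full workflow redeploy for a one-line prompt edit, will likely get harder to maintain as chains grow.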

Prompt chaining beats general-purpose automation for AI work

Zapier and similar tools excel at event-driven automation between established SaaS products. But prompt chaining requires something fundamentally different. You're not just passing data between services - you're managing an evolving conversation with a language model, where context, temperature, and prompt structure all influence outcomes.

We've found that AI-first workflow tools offer several advantages over general-purpose alternatives:

- Native prompt management: Prompts are first-class objects with their own version history and metadata.

- Built-in evaluation: Run systematic tests across prompt variations without custom scripting.

- Team collaboration: Let product and domain experts contribute directly to prompt development.

- Cleaner abstractions: Design chains around LLM concepts rather than forcing AI into a webhook-and-trigger paradigm.

The result is faster iteration and fewer surprises in production. When your tooling understands prompts natively, debugging becomes straightforward and collaboration becomes natural.

Building effective workflows with PromptLayer

Implementing prompt chaining starts with decomposing your task into discrete steps. Each step should have a clear input, a well-defined prompt, and an expected output format that feeds cleanly into the next stage.
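As a rough illustration of that decomposition, the sketch below models a chain as an ordered list of steps, where each step's output becomes the next step's input. It is a simplified sketch, not PromptLayer's implementation: `Step`, `run_chain`, and `fake_llm` are hypothetical names, and `fake_llm` is a stand-in that only wraps the prompt in angle brackets so the example runs without a model.

```python
from dataclasses import dataclass
from typing import Callable

# Any function that takes a prompt string and returns the model's text.
LLM = Callable[[str], str]


@dataclass(frozen=True)
class Step:
    name: str
    template: str  # prompt text with a single {input} placeholder


def run_chain(steps: list[Step], llm: LLM, initial_input: str) -> list[tuple[str, str]]:
    """Run the steps in order and return (step name, output) for every hop."""
    trace = []
    current = initial_input
    for step in steps:
        prompt = step.template.format(input=current)
        current = llm(prompt)  # this output feeds the next step
        trace.append((step.name, current))
    return trace


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption): wraps the prompt in angle brackets."""
    return f"<{prompt}>"


steps = [
    Step("extract", "List the key facts in: {input}"),
    Step("summarize", "Summarize these facts: {input}"),
]
trace = run_chain(steps, fake_llm, "raw ticket text")
for name, output in trace:
    print(f"{name}: {output}")
```

Returning the full trace rather than only the final answer is a deliberate choice here: when a chain misbehaves, you usually want to see which hop produced the bad intermediate output.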

Practical tips for building chains that last:

- Start with the simplest possible chain that solves your problem, then add complexity only when needed.

- Use evaluation datasets early - even a handful of test cases catches obvious regressions.

- Involve non-engineers in prompt review cycles to catch blind spots developers miss.

- Version prompts independently so you can isolate which changes improved or degraded performance.

The goal is workflows that your whole team can understand, modify, and trust.
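The evaluation tip above can start very small. The sketch below scores two versions of a chain against the same handful of test cases, so a change to one prompt can be judged by its pass rate rather than by eye. Everything in it is an assumption for illustration: `pass_rate`, the substring check, and the two stub chains `chain_v1` and `chain_v2` stand in for real LLM-backed chains and a real scoring method.

```python
from typing import Callable

# (input text, substring we expect to find in the chain's output)
EvalCase = tuple[str, str]

CASES: list[EvalCase] = [
    ("I want a refund", "refund"),
    ("Give me my money back", "refund"),
    ("How do I reset my password?", "other"),
]


def pass_rate(chain: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected substring (case-insensitive)."""
    if not cases:
        raise ValueError("evaluation dataset is empty")
    passed = sum(1 for text, expected in cases if expected.lower() in chain(text).lower())
    return passed / len(cases)


def chain_v1(text: str) -> str:
    """Stub for the chain before a prompt change: only recognizes the word 'refund'."""
    return "refund" if "refund" in text.lower() else "other"


def chain_v2(text: str) -> str:
    """Stub for the chain after a prompt change: also recognizes 'money back'."""
    lowered = text.lower()
    return "refund" if "refund" in lowered or "money back" in lowered else "other"


for label, chain in [("v1", chain_v1), ("v2", chain_v2)]:
    print(f"{label}: {pass_rate(chain, CASES):.2f}")
```

Because the dataset is fixed while the chain version varies, a drop in pass rate points at the prompt change itself, which is the reason to version prompts independently.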

Choose tools that speak LLM, not just automation

AI automation has matured past the point where general-purpose platforms suffice. If you're building real LLM products, you want infrastructure that treats prompts, chains, and evaluations as first-class citizens - not a fragile set of webhooks glued together.

So start with your workflow requirements, then work backwards. Map the steps, decide who needs to collaborate, and set expectations for testing and rollout. If a platform makes versioning and debugging feel harder as your chain grows, that's your signal to move on.