MCP vs API: Architecture Patterns for AI Agents and Applications

What actually powers the AI workflows teams are building today?

Behind every agent action, data lookup, prompt evaluation, and automated workflow sits a collection of protocols and interfaces making it possible. Two of the most important, and often confused, are MCPs and APIs.

At PromptLayer, we encounter both regularly while building prompt engineering infrastructure. Understanding when to use each one, and how they work together, has shaped how we think about AI system design.

APIs enable software systems to communicate directly with each other. The Model Context Protocol (MCP) helps AI applications connect to tools, context, and data sources in a standardized way.

PromptLayer is a useful example of how these two layers work together. Developers can use the PromptLayer API to log requests, fetch prompt templates, manage evaluations, and build deterministic product workflows. But when an AI assistant like Claude or ChatGPT needs to inspect prompt history, retrieve traces, compare versions, or run evaluation workflows through natural language, the PromptLayer MCP server provides a more agent-friendly interface on top of those same capabilities.

What the MCP Actually Does

The Model Context Protocol (MCP) is an open protocol that standardizes how AI applications connect to external tools, data sources, prompts, and context.

Think of the MCP as a shared interface between an AI application and the systems it needs to use.

The MCP handles tasks like:

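- Tool access: functions the model may call

- Context retrieval: resources the application can read

- Prompt workflows: prompts that can be reused across sessions

- Capability discovery: a way for the client to ask a server what it offers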

That discovery process is one of the biggest differences between the MCP and a traditional API.

With an API, a developer reads the documentation, chooses an endpoint, writes the integration, and decides exactly when to call it.

With the MCP, the server tells the AI client what capabilities are available. Those capabilities can include tools the model may call, resources it can read, and prompts it can reuse.

In a tool like Claude Code, this happens through an MCP client. Claude connects to one or more MCP servers, such as a GitHub server, Slack server, filesystem server, or PromptLayer MCP server.

Once connected, Claude can inspect the tools exposed by those servers, including each tool’s name, description, and expected inputs.
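To make the discovery step concrete, here is a minimal sketch of the exchange using only Python's standard library rather than an MCP SDK. MCP messages are JSON-RPC 2.0, and a client lists tools with the `tools/list` method. The tool name `search_request_logs` and its schema are illustrative assumptions, not the actual tools of the PromptLayer MCP server, and a real server would also handle initialization and transport.

```python
import json

# Hypothetical tool catalog for an illustrative server. The name, description,
# and schema are assumptions made for this sketch.
TOOLS = [
    {
        "name": "search_request_logs",
        "description": "Search logged LLM requests by prompt name and date.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "prompt_name": {"type": "string"},
                "since": {"type": "string", "description": "ISO 8601 date"},
            },
            "required": ["prompt_name"],
        },
    }
]


def handle_message(raw: str) -> str:
    """Answer a JSON-RPC 2.0 request; only `tools/list` is supported here."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        body = {"result": {"tools": TOOLS}}
    else:
        body = {"error": {"code": -32601, "message": "Method not found"}}
    return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), **body})


# The client asks the server what it offers instead of relying on docs
# that a developer read ahead of time.
list_request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
response = json.loads(handle_message(list_request))
for tool in response["result"]["tools"]:
    print(tool["name"], "->", tool["description"])
```

The name, description, and input schema returned here are what the model sees when it decides whether a tool is relevant to a request.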

For example, if a connected PromptLayer MCP server exposes tools for searching request logs, retrieving traces, listing evaluations, or running reports, Claude can use those tools when a user asks:

“Why did this prompt version perform worse last week?”

Claude can identify the relevant PromptLayer tool, pass the required arguments, receive structured results, and use that information in its response.

The underlying work may still rely on PromptLayer APIs, but the MCP gives Claude a standardized way to discover and use those actions.
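A rough sketch of that layering, assuming a hypothetical `search_request_logs` tool: the client sends a JSON-RPC `tools/call` request, and the server hands the arguments to a function that would normally call the underlying API. Here `fetch_request_logs` returns canned rows so the example runs without a network, and none of the names reflect PromptLayer's real endpoints or tools.

```python
import json
from typing import Any, Callable


def fetch_request_logs(prompt_name: str, limit: int = 5) -> list[dict[str, Any]]:
    """Stand-in for an HTTP API call; returns canned rows, no network."""
    rows = [
        {"prompt_name": "welcome_email", "version": 3, "score": 0.71},
        {"prompt_name": "welcome_email", "version": 2, "score": 0.84},
        {"prompt_name": "summarize", "version": 1, "score": 0.90},
    ]
    return [row for row in rows if row["prompt_name"] == prompt_name][:limit]


# Maps each exposed tool name to the API-backed function behind it.
TOOL_HANDLERS: dict[str, Callable[..., Any]] = {
    "search_request_logs": fetch_request_logs,
}


def call_tool(request: dict[str, Any]) -> dict[str, Any]:
    """Handle a `tools/call` request and wrap the outcome as a tool result."""
    params = request["params"]
    handler = TOOL_HANDLERS.get(params["name"])
    if handler is None:
        # An unknown tool is reported as a JSON-RPC protocol error.
        error = {"code": -32602, "message": f"Unknown tool: {params['name']}"}
        return {"jsonrpc": "2.0", "id": request["id"], "error": error}
    try:
        text = json.dumps(handler(**params.get("arguments", {})))
        is_error = False
    except TypeError as exc:
        # Wrong or missing arguments are reported inside the tool result.
        text, is_error = str(exc), True
    result = {"content": [{"type": "text", "text": text}], "isError": is_error}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


request = {
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    "params": {
        "name": "search_request_logs",
        "arguments": {"prompt_name": "welcome_email"},
    },
}
reply = call_tool(request)
print(reply["result"]["content"][0]["text"])
```

The model never sees the API's URL or authentication details. It only sees the tool name, the arguments it supplied, and the structured result.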

How APIs Enable Software Communication

Application Programming Interfaces (APIs) define how software components talk to each other, specifying the requests one system can make and the responses it should expect.

Where the MCP standardizes how AI applications access tools and context, APIs connect software systems directly.

Consider how a weather app works.

Your phone sends a request to a weather service’s API, which returns current conditions in a predictable structure.
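As an illustration of what "direct" means here, the sketch below builds that kind of request and parses a response with Python's standard library. The endpoint URL, query parameters, and response fields are all hypothetical and stand in for whatever a real weather service documents. The request is constructed but not sent, so the example runs offline.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint; a real service defines its own URL and parameters.
BASE_URL = "https://api.example-weather.test/v1/current"


def build_weather_request(city: str, api_key: str) -> Request:
    """Build the GET request the developer chose to make for this city."""
    query = urlencode({"city": city, "units": "metric"})
    headers = {"Authorization": f"Bearer {api_key}"}
    return Request(f"{BASE_URL}?{query}", headers=headers)


def parse_weather(body: str) -> dict:
    """Pick out the fields the app needs from the (assumed) response shape."""
    data = json.loads(body)
    return {
        "city": data["city"],
        "temp_c": float(data["temp_c"]),
        "rain": data["precipitation_mm"] > 0,
    }


request = build_weather_request("Brooklyn", api_key="demo-key")
# urllib.request.urlopen(request) would send it; a canned body stands in here.
sample_body = '{"city": "Brooklyn", "temp_c": 14.5, "precipitation_mm": 2.1}'
print(request.full_url)
print(parse_weather(sample_body))
```

Every decision in this code (which endpoint, which parameters, when to call) was made by the developer at build time.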

A weather MCP server would sit one layer higher.

Instead of your app calling a weather endpoint directly, Claude could connect to a weather MCP server that exposes a tool like get_current_weather.

When a user asks:

“Do I need an umbrella in Brooklyn today?”

Claude can recognize that the weather tool is relevant, pass in the location, receive the current conditions, and answer in plain language. The weather API still provides the data.

The MCP makes that data usable by the AI assistant through tool discovery and structured tool calls.

APIs appear everywhere in modern software:

- Third-party integrations

- Microservices architecture

- Public data access

- Internal tooling

REST, GraphQL, and gRPC are all widely used conventions for how software requests and receives information.

How MCPs and APIs Work Together

The distinction becomes clearer when you consider what problem you’re actually solving.

MCPs answer:

“How do we let an AI application discover and use external tools or context?”

APIs answer:

“How do we exchange data and functionality between software systems?”

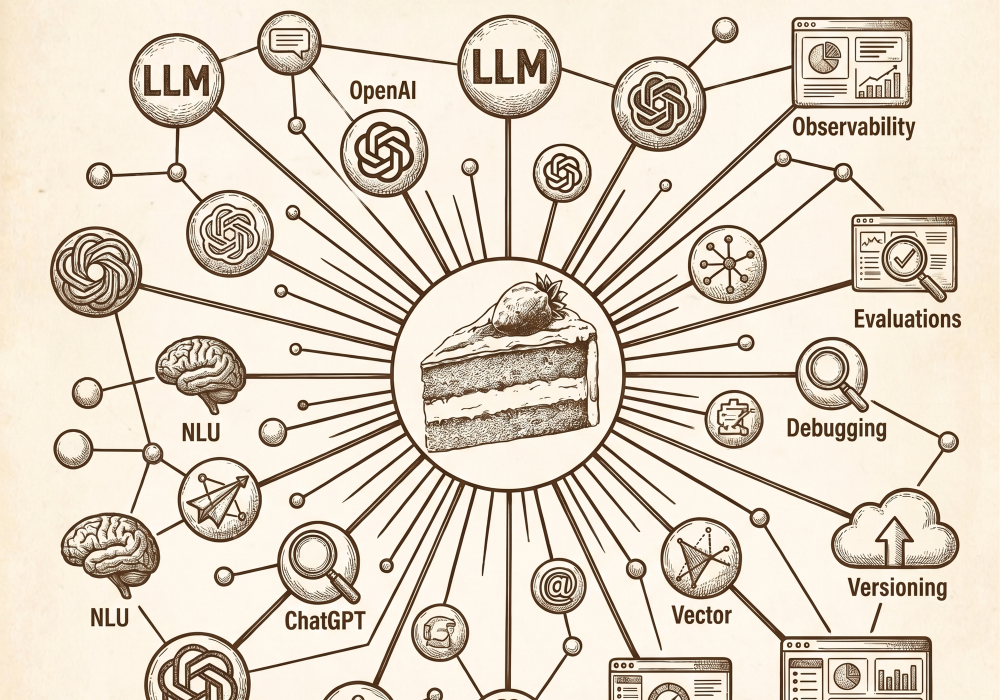

PromptLayer is a good example of how the two work together.

The PromptLayer API is the direct interface for software systems. It is what an application would use to log LLM requests, fetch prompt templates, manage datasets, or pull evaluation results inside a product workflow.

The PromptLayer MCP server is the AI-facing layer: it lets MCP-compatible clients like Claude, ChatGPT, or Wrangler AI interact with PromptLayer through discoverable tools.

Instead of manually wiring every PromptLayer endpoint into an assistant, teams can expose selected PromptLayer actions through the MCP, including:

- Searching request logs

- Retrieving traces

- Listing prompt templates

- Running reports

- Working with evaluation datasets

If your production app needs to fetch a prompt template before calling an LLM, use the API.

If your AI assistant needs to investigate why a prompt failed, compare versions, or summarize recent evaluation results, the MCP is often the cleaner interface.

A well-designed AI system typically uses both.

APIs expose the underlying business logic and data.

MCPs package the right parts of that functionality so AI applications can understand what is available, decide when to use it, and call it through a consistent protocol.
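One way to picture that packaging step is a small registry that exposes only chosen operations as tools. This is a sketch in plain Python, not the MCP SDK or PromptLayer's implementation. The three functions are hypothetical stand-ins for API operations, and `ToolRegistry` is an illustrative name.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


# Hypothetical stand-ins for operations a full API might offer.
def list_prompt_templates() -> list[str]:
    return ["welcome_email", "summarize"]


def search_request_logs(prompt_name: str) -> list[dict[str, Any]]:
    return [{"prompt_name": prompt_name, "status": "ok"}]


def delete_prompt_template(name: str) -> str:
    return f"deleted {name}"


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    handler: Callable[..., Any]


class ToolRegistry:
    """Holds only the operations deliberately exposed to an AI client."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def expose(self, handler: Callable[..., Any], description: str) -> None:
        self._tools[handler.__name__] = Tool(handler.__name__, description, handler)

    def describe(self) -> list[dict[str, str]]:
        """What a client would learn when it asks which tools exist."""
        return [
            {"name": tool.name, "description": tool.description}
            for tool in self._tools.values()
        ]

    def call(self, tool_name: str, arguments: Optional[dict[str, Any]] = None) -> Any:
        if tool_name not in self._tools:
            raise KeyError(f"{tool_name} is not exposed as a tool")
        return self._tools[tool_name].handler(**(arguments or {}))


registry = ToolRegistry()
registry.expose(list_prompt_templates, "List available prompt templates.")
registry.expose(search_request_logs, "Search request logs for one prompt.")
# delete_prompt_template stays API-only: it is never registered as a tool.

print(registry.describe())
print(registry.call("search_request_logs", {"prompt_name": "summarize"}))
```

The destructive operation still exists in the API for deterministic code paths, but an assistant connected through this registry cannot discover or call it.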

Final Thoughts

MCPs and APIs serve complementary but distinct roles in modern AI infrastructure.

MCPs provide a standardized way for AI applications to access tools, prompts, resources, and context.

APIs provide the software interface for exchanging information and triggering functionality between systems.

Most production AI systems need both layers working together.

Use APIs when you need direct, deterministic software-to-software communication.

Use the MCP when you want an AI application or agent to discover and use external capabilities through a shared protocol.

In that architecture, APIs remain the source of truth for business logic and data access.

MCP servers expose a selected subset of API-backed capabilities to AI applications in a discoverable format, which keeps the agent-facing surface smaller than the full API.

Get that right, and your architecture will be cleaner, more maintainable, and easier to reason about.