Composer: What Cursor's New Coding Model Means for LLMs

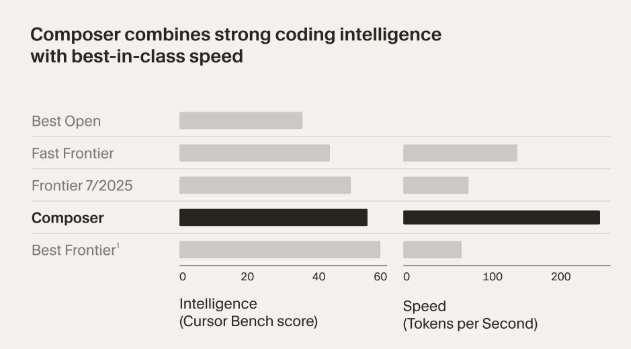

Cursor just released Composer, an AI model that completes most coding tasks in under 30 seconds, 4× faster than comparable systems. It was trained inside real codebases using reinforcement learning. This isn't merely an incremental improvement to existing AI coding assistants; Composer represents a fundamental shift from general-purpose LLMs applied to code toward specialized models trained within development environments.

This release signals a new direction for AI coding tools (domain-specific training, agentic workflows, and IDE-native intelligence) that could reshape how LLMs are built and deployed across the industry.

What Is Composer?

Composer is Cursor's first proprietary coding LLM, released as part of Cursor 2.0 in October 2025. Unlike general language models adapted for coding tasks, Composer was purpose-built for "agentic" coding: it plans, writes, tests, and reviews code autonomously, functioning more like a collaborative teammate than a suggestion engine.

The performance statistics are striking: Composer achieves a throughput of approximately 250 tokens per second, with most tasks completing in under 30 seconds. That responsiveness is a deliberate design choice: it keeps developers engaged in the coding process rather than waiting for AI responses.
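
As a rough sanity check on those two figures, the token budget they imply can be worked out directly. This is a minimal sketch that assumes throughput stays constant for the whole task and ignores time spent waiting on tool calls, which a real agent run would add; the function name is illustrative.

```python
def token_budget(tokens_per_second: float, seconds: float) -> float:
    """Upper bound on tokens generated at a constant throughput."""
    return tokens_per_second * seconds


# Figures quoted in the article: ~250 tokens/s and a 30-second ceiling.
print(token_budget(250, 30))  # 7500
```

In other words, a 30-second task at this throughput has room for at most about 7,500 generated tokens, covering planning, tool calls, and the edits themselves.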

What sets Composer apart is its training methodology. Rather than learning from static code repositories, the model was trained with reinforcement learning inside live codebases using real development tools such as file editing, semantic search, and terminal commands. This approach allows Composer to learn the actual process of software development.

Why Speed and "Agentic" Behavior Matter

The emphasis on speed isn't merely about reducing wait times. Fast inference keeps developers "in the loop" during iteration cycles, maintaining their mental context and flow state. When an AI assistant takes minutes to complete a task, developers often context-switch to other activities, disrupting their productivity and thought processes.

Composer handles multi-step tasks autonomously. For example, when asked to "add user login flow," Composer plans the implementation, searches the repository for relevant files, makes coordinated edits across multiple components, and ensures consistency with existing patterns.

This contrasts sharply with traditional LLMs that operate in a query-response pattern. Composer functions as a true coding collaborator, capable of breaking down complex requirements into actionable steps and executing them with minimal human intervention.
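
The difference between a query-response model and an agent is easiest to see as a loop. The sketch below is a toy plan, search, edit, verify cycle over an in-memory "repository"; `ToyRepo`, `run_agent`, and the keyword-matching search are hypothetical stand-ins for illustration, not Cursor's tooling or API.

```python
from dataclasses import dataclass, field


@dataclass
class ToyRepo:
    """In-memory stand-in for a codebase: path -> file contents."""
    files: dict[str, str] = field(default_factory=dict)

    def search(self, keyword: str) -> list[str]:
        """Return paths whose contents mention the keyword (toy search)."""
        return sorted(p for p, text in self.files.items() if keyword in text)

    def edit(self, path: str, addition: str) -> None:
        """Append a line to a file, creating the file if needed."""
        self.files[path] = self.files.get(path, "") + addition + "\n"


def run_agent(repo: ToyRepo, keyword: str, marker: str, max_steps: int = 5) -> list[str]:
    """Plan -> search -> edit -> verify loop. Returns a log of the steps taken."""
    log = [f"plan: add '{marker}' wherever '{keyword}' is used"]
    for _ in range(max_steps):
        # Verify: which relevant files still lack the change?
        pending = [p for p in repo.search(keyword) if marker not in repo.files[p]]
        if not pending:
            log.append("verify: all relevant files updated")
            break
        # Act on one file per step, then loop back and re-check.
        target = pending[0]
        repo.edit(target, marker)
        log.append(f"edit: {target}")
    return log


repo = ToyRepo({
    "routes.py": "def user_page(): ...\n",
    "models.py": "class user: ...\n",
    "README.md": "docs\n",
})
for line in run_agent(repo, "user", "# TODO: require login"):
    print(line)
```

A query-response model would stop after producing one edit; the loop keeps going until its own check passes or the step budget runs out, which is the behavior the "add user login flow" example describes.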

How Composer Was Trained Differently

The training approach for Composer represents a significant departure from conventional LLM development. Composer was trained in situ, inside real coding environments with access to actual development tools.

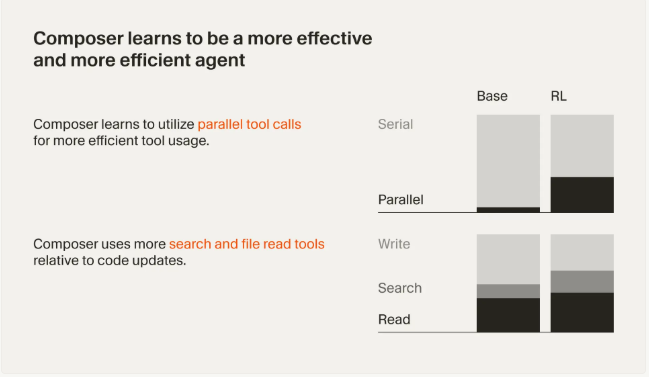

This reinforcement learning approach, implemented on custom infrastructure using thousands of GPUs, allowed Composer to learn emergent behaviors that weren't explicitly programmed. The model discovered how to:

- Run unit tests and interpret results

- Fix linting errors automatically

- Conduct multi-step searches across codebases

- Make parallel edits for efficiency
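
The article does not describe Composer's reward signal, so the following is only a toy illustration of how an outcome-based reward could make behaviors like these pay off without being programmed in. The `EpisodeResult` fields, the penalty weights, and the formula are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class EpisodeResult:
    """Hypothetical summary of one training episode in a sandboxed repo."""
    tests_passed: int
    tests_total: int
    lint_errors: int
    tool_calls: int


def episode_reward(r: EpisodeResult, lint_penalty: float = 0.05,
                   step_cost: float = 0.01) -> float:
    """Reward = test pass rate, minus penalties for lint errors and tool calls."""
    pass_rate = r.tests_passed / r.tests_total if r.tests_total else 0.0
    return pass_rate - lint_penalty * r.lint_errors - step_cost * r.tool_calls


# An episode that skips verification vs. one that runs tests and fixes lint.
careless = EpisodeResult(tests_passed=3, tests_total=4, lint_errors=2, tool_calls=5)
careful = EpisodeResult(tests_passed=4, tests_total=4, lint_errors=0, tool_calls=8)
print(episode_reward(careful) > episode_reward(careless))  # True
```

Under a signal shaped like this, running the tests and cleaning up lint errors scores higher even though it costs extra tool calls, and the small per-call cost leaves an incentive to batch or parallelize work.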

The architecture itself, a Mixture-of-Experts model, was chosen specifically for fast, low-precision inference using MXFP8 optimization. This design choice prioritizes speed without sacrificing the quality needed for practical coding tasks. It's a deliberate trade-off that treats responsiveness as just as important as raw intelligence in developer tools.

The Multi-Agent Coding Paradigm

Cursor 2.0 fundamentally reimagines the IDE experience by centering it around AI agents rather than manual file editing. Developers can run up to 8 isolated agents in parallel on the same task, each working in its own git worktree or remote VM. This parallel processing allows for comparing multiple solutions and selecting the best approach, a strategy that often improves output quality, especially for complex problems.
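
The compare-and-select strategy can be sketched as a best-of-N run. In this minimal example, plain Python callables stand in for agents and a thread pool stands in for isolated worktrees or VMs; `best_of_n`, the toy agents, and the scoring function are hypothetical, and only the limit of 8 comes from the article.

```python
from concurrent.futures import ThreadPoolExecutor

MAX_AGENTS = 8  # parallel-agent limit mentioned in the article


def best_of_n(task, agents, score):
    """Run every agent on the same task in parallel; return (index, candidate) of the best."""
    if not 1 <= len(agents) <= MAX_AGENTS:
        raise ValueError(f"expected 1 to {MAX_AGENTS} agents")
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # pool.map preserves input order, so indices line up with `agents`.
        candidates = list(pool.map(lambda agent: agent(task), agents))
    best = max(range(len(candidates)), key=lambda i: score(candidates[i]))
    return best, candidates[best]


# Toy agents: each returns a candidate implementation of absolute value.
agents = [
    lambda task: (lambda x: x),                    # wrong for negatives
    lambda task: (lambda x: -x if x < 0 else x),   # correct
    lambda task: (lambda x: -x),                   # wrong for positives
]


def score(candidate):
    """Count how many test cases the candidate passes."""
    cases = [(-2, 2), (0, 0), (3, 3)]
    return sum(candidate(arg) == expected for arg, expected in cases)


index, winner = best_of_n("implement abs", agents, score)
print(index)  # 1
```

The selection step only works as well as the scoring function, which is why a test suite or a human reviewing the diffs matters as much as the number of agents.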

The new IDE includes several features that support this agent-centric workflow:

- Embedded browser for testing: Agents can interact with live application UIs, iteratively refining their code until tests pass

- Aggregated diff review: All proposed changes across files appear in a single view for easy review

- Voice control: Developers can initiate and manage agent tasks through speech commands

- Sandboxed terminals: Safe execution environments for running agent-generated code

Crucially, developers retain oversight throughout the process. They can preview all diffs before applying changes, ensuring that AI-generated code meets their standards and requirements.

What This Means for the LLM Field

Composer's release highlights several critical trends that will likely shape the future of LLMs:

Domain Specialization Over General Models

The success of a narrowly focused model like Composer demonstrates that specialized LLMs can outperform larger general models in specific domains. While GPT-5 or Claude Sonnet might exhibit superior general reasoning, Composer's combination of speed and contextual understanding makes it more practical for actual coding tasks.

Speed vs. Intelligence Tradeoff

Composer's emphasis on sub-30-second completions underscores that inference latency is now critical. A model that's slightly less "intelligent" but 4× faster may be more valuable in practice than a brilliant but slow alternative. This will likely drive increased research into high-throughput inference techniques, including quantization, specialized hardware, and efficient architectures.
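
One way to make the trade-off concrete is to compare the expected time until a usable result, assuming the developer simply retries after each failed attempt. The latencies and success rates below are hypothetical numbers chosen for illustration, not measurements of any model, and the calculation ignores the human time spent reviewing each attempt.

```python
def expected_seconds_to_success(latency_s: float, success_rate: float) -> float:
    """Expected total time when each attempt takes latency_s seconds and
    succeeds independently with probability success_rate (geometric retries)."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return latency_s / success_rate


# Hypothetical figures: the slower model is 4x slower per attempt but more accurate.
fast = expected_seconds_to_success(latency_s=30, success_rate=0.6)
slow = expected_seconds_to_success(latency_s=120, success_rate=0.9)
print(f"fast: {fast:.1f}s, slow: {slow:.1f}s")  # fast: 50.0s, slow: 133.3s
```

With these assumed numbers the faster model reaches a good result sooner on average despite failing more often. The conclusion flips if its success rate drops far enough, or if reviewing each failed attempt is expensive.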

Integration into Developer Workflows

The tight coupling between Composer and the Cursor IDE suggests that future coding LLMs will be IDE-native, not just API endpoints. Deep integration with existing tools, version control systems, and development workflows will become table stakes for AI coding assistants.

Observability and Prompt Management

As specialized models like Composer become production-critical tools, observability infrastructure becomes equally important. Teams deploying AI coding assistants need visibility into model performance, prompt effectiveness, and cost management across thousands of daily interactions. Tools like PromptLayer have emerged to address this gap, providing prompt versioning, analytics, and monitoring capabilities that help engineering teams understand how their AI assistants perform in real-world scenarios. This layer of observability will be essential as more domain-specific models move from experimental tools to core development infrastructure, enabling teams to optimize prompts, track regressions, and manage costs at scale.

Real-World Caveats

A recent study found that experienced developers sometimes slowed down when using AI assistants, spending additional time reviewing and correcting AI-generated suggestions. The code produced was often "directionally correct, but not exactly what's needed," requiring careful human oversight.

Composer faces several practical challenges:

- Accuracy limitations: While fast, Composer isn't the "smartest" model available. GPT-5 and Claude Sonnet still outperform it in raw intelligence, though at the cost of speed

- Platform lock-in: Composer only runs within Cursor's proprietary IDE, limiting adoption for teams invested in other development environments

- Security considerations: As a closed-source model, Composer may raise concerns for organizations requiring on-premises deployment or complete code isolation

These limitations underscore that Composer augments rather than replaces human developers. The technology excels at reducing boilerplate work and accelerating common tasks, but critical thinking and code review remain essential.

Reimagining AI-Assisted Development

Composer demonstrates a future where LLMs serve as active coding partners. By combining specialized training, environment-aware learning, and aggressive speed optimization, Cursor has created a model that changes how developers interact with AI.

The key takeaway is clear: specialized, environment-trained models + agentic workflows + speed optimization = the new frontier for AI-assisted development. This is about reimagining the development process itself.

For the broader industry, Composer's release signals an inflection point. We should expect more domain-specific LLMs trained with reinforcement learning and real tools, pushing beyond the limitations of general-purpose models. The future of AI coding assistance isn't just smarter models; it's models that understand the craft of software development itself.