Best Practices for Evaluating Back-and-Forth Conversational AI

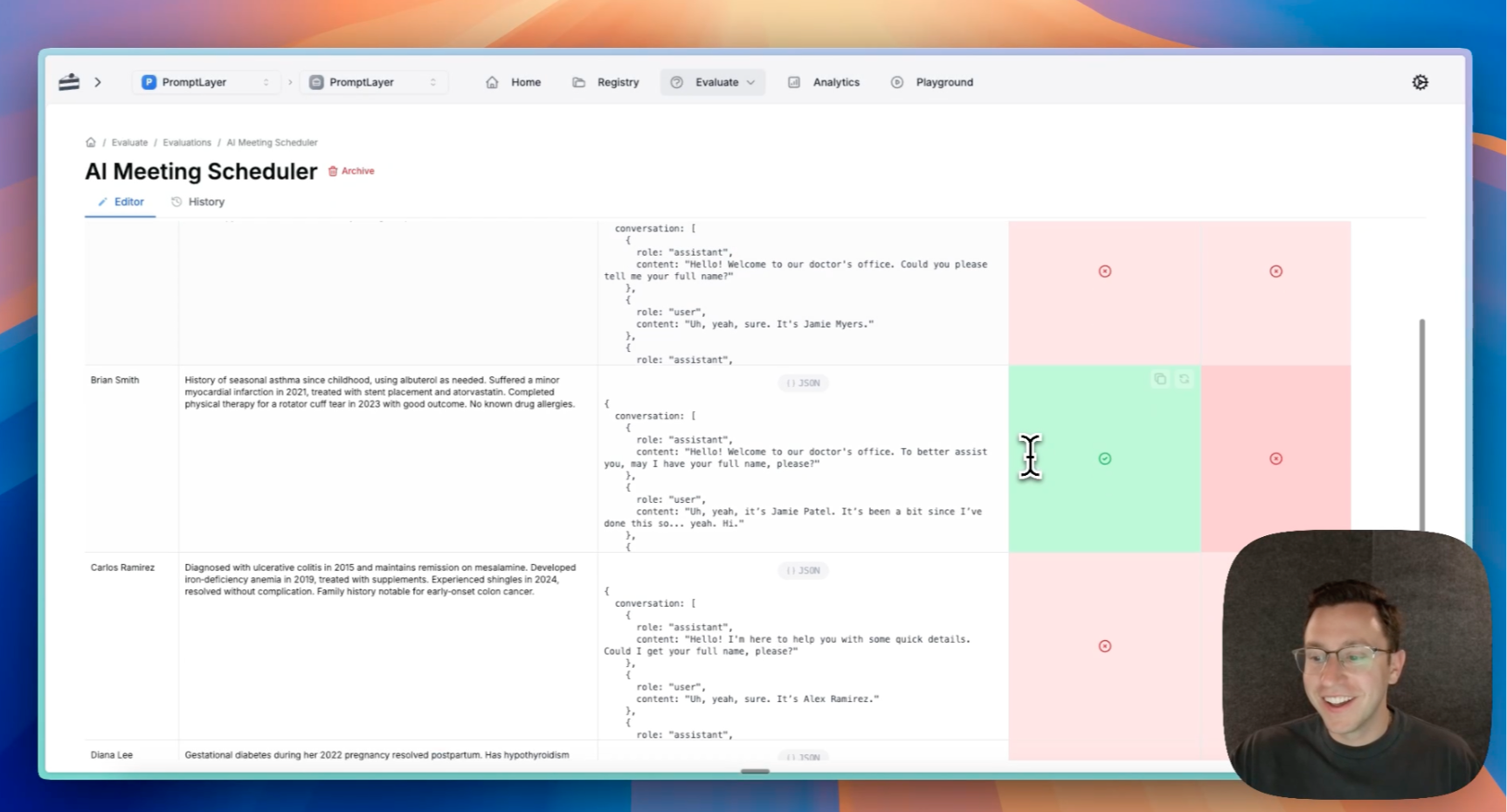

Building conversational AI agents is hard. Ensuring they perform reliably across diverse scenarios is even harder. When your agent needs to handle multi-turn conversations, maintain context, and achieve specific goals, traditional single-prompt evaluation methods fall short. In this guide, I'll walk you through best practices for evaluating conversational